Scrapy provides a lot more functionality than BeautifulSoup. The framework lets you scrape data through “web spiders”: small scripts designed to collect data and follow hyperlinks as they are discovered on a page. This makes it particularly well suited to websites and blogs that use pagination.

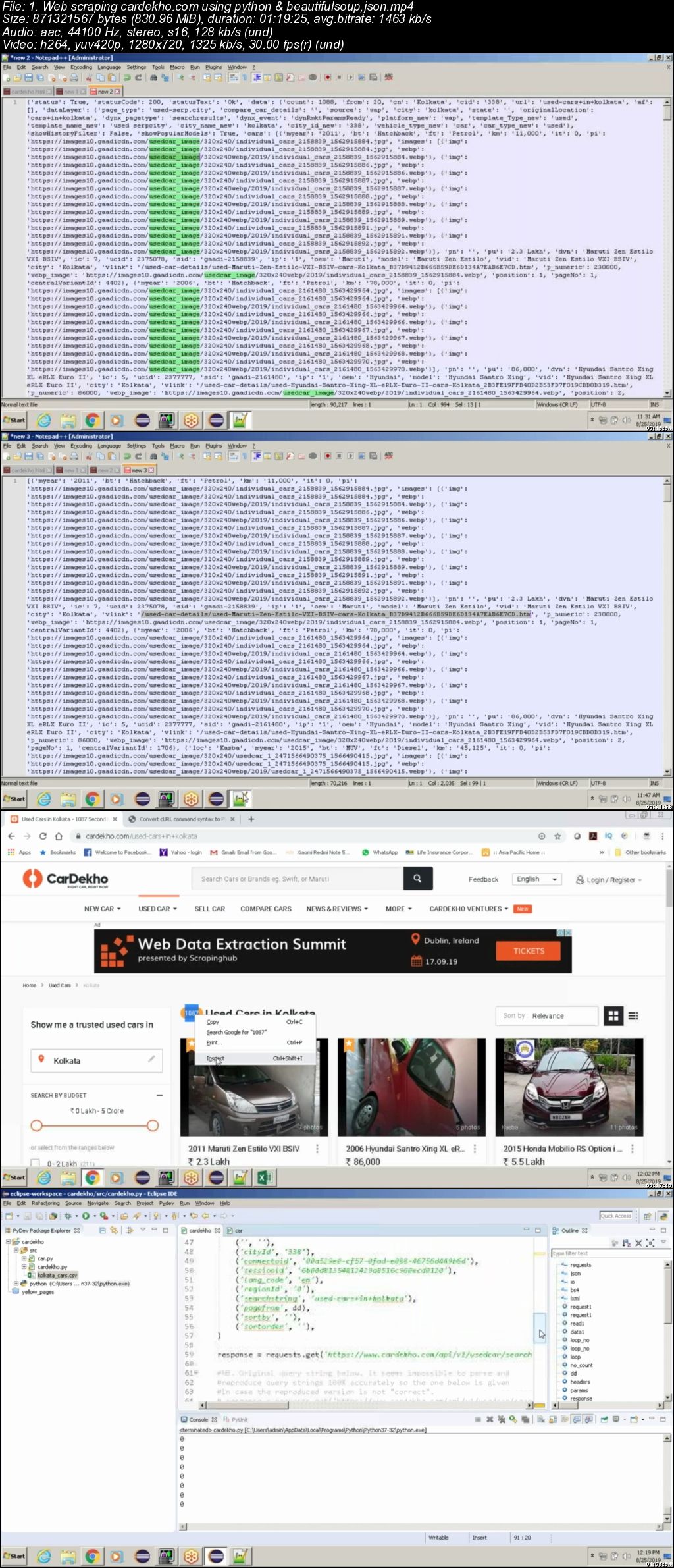

This blog post focuses on four of the most widely used and well-known Python packages for parsing HTML documents, which are essential to your web scraping toolbox in 2022.

BeautifulSoup

BeautifulSoup is the go-to package for many Python web scrapers. It’s easy to install and get going, and it integrates well with requests. The BeautifulSoup website says that “it commonly saves programmers hours or days of work”, which is certainly the case when it comes to web scraping. It also supports several different parsers (including html.parser and lxml).

BeautifulSoup can be installed by running the following command:

    $ pip install beautifulsoup4

Once installed, BeautifulSoup can be used to start scraping. In this case, the code below is the first step in extracting all hyperlinks (anchor tags) from a website: it parses the HTML held in r.text.

    soup = BeautifulSoup(r.text, 'html.parser')

Unlike BeautifulSoup, Scrapy is an entire web scraping framework designed for scraping data at scale, as opposed to simple tasks like the one shown above.
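To make the BeautifulSoup link-extraction step above more concrete, here is a minimal sketch. The `extract_links` helper and the sample HTML are hypothetical and are not the post's original code; the sample is inline so the sketch runs without network access.

```python
from urllib.parse import urljoin

from bs4 import BeautifulSoup


def extract_links(html, base_url):
    """Return absolute URLs for every anchor tag that has an href."""
    soup = BeautifulSoup(html, "html.parser")
    links = []
    for anchor in soup.find_all("a", href=True):
        # Resolve relative links against the page's base URL.
        links.append(urljoin(base_url, anchor["href"]))
    return links


# Inline sample HTML (made up for illustration).
SAMPLE_HTML = """
<html><body>
  <a href="/about">About</a>
  <a href="https://example.org/post">Post</a>
  <a name="no-href">Skipped</a>
</body></html>
"""

links = extract_links(SAMPLE_HTML, "https://example.com")
print(links)
```

With a live page, the `html` argument would typically be the `text` attribute of a requests response, as in the `r.text` fragment above.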

    from rightmove_webscraper import RightmoveData
    url = ""
    rm = RightmoveData(url)

What will be scraped?

When a RightmoveData instance is created, it automatically scrapes every page of results available from the search URL. However, please note that rightmove restricts the total possible number of results pages to 42. Therefore, if you perform a search which could theoretically return many thousands of results (e.g. "all rental properties in London"), in practice you are limited to scraping only the first 1050 results (42 pages * 25 listings per page = 1050 total listings). A couple of suggested workarounds to this limitation are:

- Reduce the search area and perform multiple scrapes, e.g. perform a search for each London borough instead of one search for all of London.
- Add a search filter to shorten the timeframe in which listings were posted, e.g. search for all listings posted in the past 24 hours, and schedule the scrape to run daily.

Finally, note that not every piece of data listed on the rightmove website is scraped; instead it is just a subset of the most useful features, such as price, address, number of bedrooms and listing agent. If there are additional data items you think should be scraped, please submit an issue or, even better, go find the xml path and submit a pull request with the changes.

The following instance methods and properties are available to access the scraped data.

Python is known for being able to do many different things due to the versatility of the programming language. One thing Python is particularly well known for is its ability to receive data over the Web using the requests package (more information can be found here). The requests package enables users to perform basic HTTP requests using methods such as GET and POST. These methods can be used for sending and receiving data over the Web and for accessing various resources (e.g. JSON, CSV, HTML files).

Furthermore, requests supports adding parameters and header information to simulate the behaviour of a web browser such as Chrome or Firefox. In most cases, requests is used to receive the raw HTML document generated by a web server which, in turn, can be parsed to extract or “scrape” the relevant information. The combination of requesting and parsing an HTML document from a web server produces a web scraper. While the requests package can do many things, it can’t parse HTML documents. For this reason, a separate HTML parser is needed.
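As a rough sketch of the requests usage described above (adding query parameters and browser-like headers to a GET request), the example below first builds a request without sending it, so the resulting URL and headers can be inspected offline. The URL, parameter names, header value and the `fetch_html` helper are all assumptions made for illustration.

```python
import requests

# Hypothetical values for illustration only.
URL = "https://example.com/search"
PARAMS = {"q": "python", "page": 2}
HEADERS = {"User-Agent": "Mozilla/5.0 (X11; Linux x86_64)"}

# Build the request without sending it, to inspect what would be sent.
prepared = requests.Request("GET", URL, params=PARAMS, headers=HEADERS).prepare()
print(prepared.url)
print(prepared.headers["User-Agent"])


def fetch_html(url, params=None, headers=None, timeout=10):
    """Send a GET request and return the raw HTML text of the response."""
    response = requests.get(url, params=params, headers=headers, timeout=timeout)
    response.raise_for_status()  # Raise an error on 4xx/5xx responses.
    return response.text
```

The text returned by a helper like `fetch_html` is still just a string; it has to be handed to a separate HTML parser before any data can be extracted from it.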
